Accountable Care Organizations, Data Federation and CMS’ Updated Final Rule for the Medicare Shared Savings Program

Monday, June 8th, 2015

CMS has published a final rule (http://federalregister.gov/a/2015-14005) on changes to the Medicare Shared Savings Program (MSSP) that significantly affect Accountable Care Organizations (ACOs). The rule makes a variety of interesting changes to the program. For this discussion I’m looking at CMS’ continual drive toward data use and integration as a basis for improving quality of care, gaining efficiency and cutting costs in health care. One way this drive shows up in the new rule concerns an ACO’s plans for “enabling technologies,” an umbrella term for leveraging electronic data.

As background, Subpart B (425.100 to 425.114) of the MSSP describes ACO eligibility requirements. Two of the changes in this section clearly underscore the importance of electronic data and data integration to the fundamental operation of an ACO. Specifically, looking at page 127, the following updates are being made to section 425.112(b)(4) (emphasis mine):

> Therefore, we proposed to add a new requirement to the eligibility requirements under § 425.112(b)(4)(ii)(C) which would require an ACO to describe in its application how it will encourage and promote the use of enabling technologies for improving care coordination for beneficiaries. Such enabling technologies and services may include electronic health records and other health IT tools (such as population health management and data aggregation and analytic tools), telehealth services (including remote patient monitoring), health information exchange services, or other electronic tools to engage patients in their care.

It goes on to add:

> Finally, we proposed to add a provision under § 425.112(b)(4)(ii)(E) to require that an ACO define and submit major milestones or performance targets it will use in each performance year to assess the progress of its ACO participants in implementing the elements required under § 425.112(b)(4). For instance, providers would be required to submit milestones and targets such as: projected dates for implementation of an electronic quality reporting infrastructure for participants;

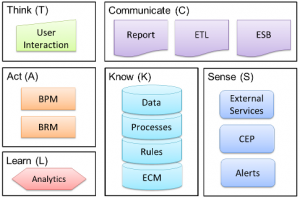

It is clear from the first change that an ACO must have a documented plan in place for continually expanding its use of electronic data and providing data visibility and integration between itself and its beneficiaries and providers. This is a tall order. The number of different systems and data formats along with myriad reporting and analytic platforms makes a traditional integration approach tedious at best and a significant business risk at worst.

The second change, keeping CMS apprised of the progress of data-centric projects, is clearly intended to keep attention on these data publishing and integration projects. A well-articulated plan won’t be enough; the ACO must be able to demonstrate progress on a regular basis.